Hi, my name is

Sejal Barshikar.

I build intelligent systems with deep learning.

I'm a Master's student in Computer Science at Northeastern University, specializing in AI/ML with a focus on Natural Language Processing and Computer Vision. I build end-to-end deep learning systems and have published research in code summarization and serverless computing.

About Me

Hello! I'm Sejal, and I'm passionate about building intelligent systems that solve real-world problems. My journey into AI began during my undergraduate studies in India, where I developed a strong foundation in machine learning algorithms and deep learning architectures.

I'm currently pursuing my Master's in Computer Science at Northeastern University, specializing in AI and Machine Learning. I've worked on several projects, including a Research Paper Classifier built by fine-tuning BERT, a Video Content Analyzer, and a Neural Machine Translation System. I also gained hands-on industry experience as a Data Science Intern at AICTE, where I built ML pipelines processing millions of transaction records. My main focus these days is Deep Learning for Natural Language Processing and Computer Vision.

Tools and Technologies

- Python

- PyTorch

- TensorFlow

- Keras

- Hugging Face

- OpenCV

- Scikit-Learn

- C++

- JavaScript

- SQL

- Git

- MongoDB

Education

Experience

Data Science Intern @ AICTE

December 2024 - May 2025

- Built an end-to-end ETL pipeline processing 5M+ retail transaction records using optimized Pandas workflows, reducing data preprocessing time by 60%

- Engineered RFM behavioral features (recency, frequency, monetary) and applied K-Means clustering, evaluated across 10+ initializations via silhouette analysis, identifying 5 distinct customer segments

- Collaborated with a team of 10+ analysts and engineers to define segmentation criteria, validate cluster profiles, and align model outputs with targeted marketing objectives

- Deployed segmentation inference via a Flask REST API, achieving sub-100ms latency for real-time use

- Drove a 20% increase in conversion rates through segment-specific behavioral profiling and personalized recommendations
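The RFM features mentioned in the bullets above can be illustrated with a small sketch. It is not the internship code: it uses only the Python standard library rather than Pandas, and the function name `rfm_features`, the tuple layout, and the sample records are all hypothetical.

```python
from collections import defaultdict
from datetime import date


def rfm_features(transactions, as_of):
    """Compute recency, frequency, and monetary value per customer.

    `transactions` is an iterable of (customer_id, purchase_date, amount)
    tuples; this layout is an assumption made for the example.
    """
    last_purchase = {}
    frequency = defaultdict(int)
    monetary = defaultdict(float)

    for customer_id, purchase_date, amount in transactions:
        frequency[customer_id] += 1          # number of purchases
        monetary[customer_id] += amount      # total spend
        previous = last_purchase.get(customer_id)
        if previous is None or purchase_date > previous:
            last_purchase[customer_id] = purchase_date

    return {
        customer_id: {
            # Days since the most recent purchase, relative to `as_of`.
            "recency_days": (as_of - last_purchase[customer_id]).days,
            "frequency": frequency[customer_id],
            "monetary": monetary[customer_id],
        }
        for customer_id in last_purchase
    }


# Made-up sample records, for illustration only.
sample = [
    ("c1", date(2025, 1, 5), 40.0),
    ("c1", date(2025, 2, 1), 60.0),
    ("c2", date(2024, 12, 20), 15.0),
]
features = rfm_features(sample, as_of=date(2025, 3, 1))
```

In a pipeline like the one described, features of this shape would typically be scaled before being passed to a clustering step such as K-Means.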

Projects

Featured Project

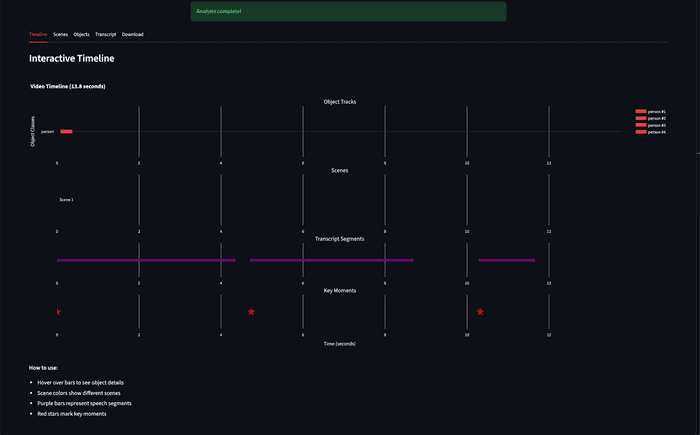

AI-Powered Video Content Analyzer

Engineered a multimodal video analysis pipeline integrating YOLOv8 + BoTSORT object tracking, OpenAI Whisper speech transcription, and CLIP scene understanding to automate semantic extraction across all three modalities simultaneously

Achieved about 6x faster-than-real-time processing with CPU-only inference, analyzing a 6-minute video in under 62 seconds with a peak memory footprint under 1.7GB, enabling deployment in memory-constrained environments

Architected a FastAPI backend with async background job processing and RESTful endpoints, containerized with Docker, supporting concurrent video uploads with real-time job status tracking and sub-500ms API response times

- Computer Vision

- NLP

- OpenCV

- PyTorch

- Python

Featured Project

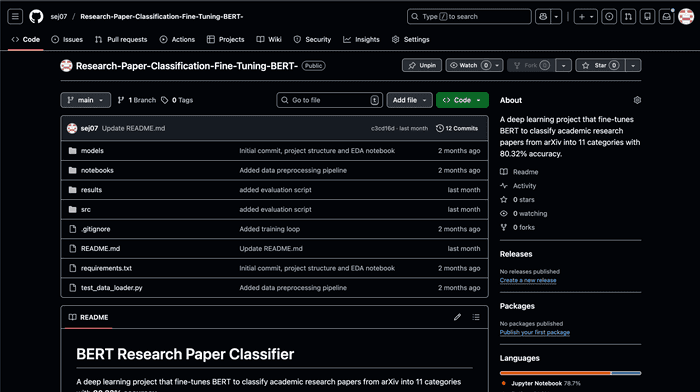

Research Paper Classifier

Fine-tuned BERT-base (109M parameters) on 28K arXiv abstracts for 11-category classification, achieving 80.3% test accuracy (8.8x above the random baseline) with 9 of 11 categories exceeding 80% F1

Conducted systematic error analysis via a confusion matrix, identifying semantic overlap between interdisciplinary categories and documenting category-specific failure modes to guide future dataset refinement

Optimized training for Apple Silicon MPS with gradient clipping, linear warmup scheduling, and AdamW, achieving stable convergence in a single epoch across 3,549 batches in a resource-constrained environment

- PyTorch

- BERT

- Transformers

- Python

Featured Project

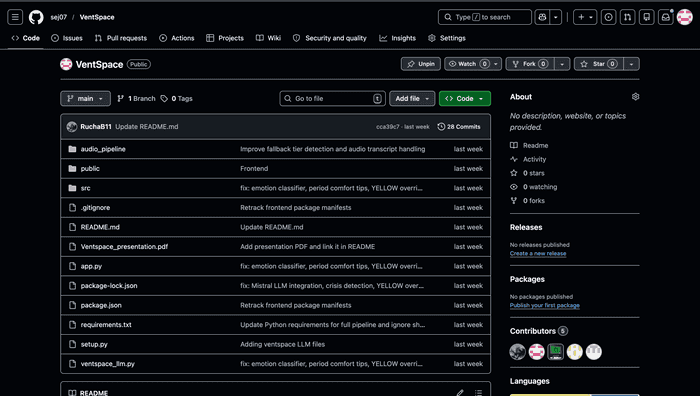

VentSpace

Engineered an audio emotion pipeline, training an SVM on 35 librosa features across 1,056 labeled samples from RAVDESS and CREMA-D and achieving 84% accuracy with 0.87 precision on the frustration class

Integrated OpenAI Whisper transcription with cycle-aware context to deliver personalized weekly mental health reports via a multimodal fusion pipeline

Designed a public REST API with structured JSON output across 5 fields enabling clean multimodal fusion across audio, text, and cycle-aware pipelines

- Python

- OpenAI Whisper

- REST API

- Multimodal

Other Projects

Neural Machine Translation System

Reimplemented the “Sequence to Sequence Learning with Neural Networks” paper using TensorFlow on the English-French WMT14 dataset with 40,000 sentence pairs

Designed a 4-layer LSTM encoder-decoder architecture with a Bahdanau attention mechanism and 512-dimensional hidden state representations to handle variable-length sequences of up to 50 tokens

Optimized the training pipeline with gradient clipping, teacher forcing with a decay schedule, and an adaptive learning rate, reducing convergence time by 30% and preventing gradient explosion

LifeLink - Organ Transplant Management System

Comprehensive database-driven organ transplant management system handling the complete donation lifecycle. Designed 18 normalized (3NF) tables with automated matching algorithms using weighted scoring (Blood: 30%, HLA: 25%, Wait Time: 20%). Implemented 6 automated triggers, 4 stored procedures, and 4 custom functions for real-time priority-based waitlist management and organ allocation with viability tracking.
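The weighted scoring idea can be sketched as follows. This is an illustrative Python version, not the project's stored procedures. It uses only the three weights listed above, which sum to 75%; the remaining factors are not named here, so the sketch leaves them out. The function name `match_score` and the convention that each component is pre-normalized to a 0 to 1 range are assumptions.

```python
# Weights as listed in the project description; other factors are not
# specified there, so they are deliberately omitted from this sketch.
WEIGHTS = {"blood": 0.30, "hla": 0.25, "wait_time": 0.20}


def match_score(components):
    """Combine per-factor compatibility scores into one weighted score.

    `components` maps each factor name to a value in [0, 1]; that
    normalization is an assumption made for this example.
    """
    missing = WEIGHTS.keys() - components.keys()
    if missing:
        raise ValueError(f"missing components: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0.0 <= components[name] <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return sum(WEIGHTS[name] * components[name] for name in WEIGHTS)


# Hypothetical candidate: full blood compatibility, partial HLA match,
# and a relatively short wait time.
score = match_score({"blood": 1.0, "hla": 0.5, "wait_time": 0.25})
```

With only these three factors, the highest possible score is 0.75, so scores from this sketch are comparable to each other but are not percentages of a full match.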

CIFAR-10 Image Classification

Custom CNN architecture with 1.15M parameters achieving 64.62% test accuracy. Implemented batch normalization, dropout regularization, and an optimized training pipeline with data augmentation techniques.

Character-Level Text Generation with LSTM

Stacked LSTM model with 512 hidden units per layer for Shakespeare-style text generation. Trained on 1.1M character sequences with temperature sampling for controllable generation.
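Temperature sampling, as used above for controllable generation, can be shown with a short standard-library sketch. It operates on a plain list of logits rather than on the LSTM's actual output, and the names `apply_temperature` and `sample_index` and the example logits are hypothetical.

```python
import math
import random


def apply_temperature(logits, temperature):
    """Turn logits into probabilities after dividing by `temperature`.

    Values below 1 sharpen the distribution (more predictable text);
    values above 1 flatten it (more varied text).
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


def sample_index(logits, temperature, rng=random):
    """Sample one index (e.g. a character id) from the scaled distribution."""
    probs = apply_temperature(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Made-up logits for three candidate characters.
logits = [2.0, 1.0, 0.1]
sharp = apply_temperature(logits, 0.5)
flat = apply_temperature(logits, 2.0)
next_index = sample_index(logits, 1.0, rng=random.Random(0))
```

Lower temperatures put more probability on the top-scoring character, while higher temperatures spread it across the alternatives.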

Plant Disease Classification using CNN

Built a CNN model to classify plant leaf images into disease categories using the PlantVillage dataset (54,000+ images). Achieved 97% training accuracy and 87% validation accuracy with a custom architecture featuring data augmentation and transfer learning techniques. Developed a predictive system for real-time disease detection to support agricultural productivity.

Number to Words Translation using Seq2Seq

Implemented a sequence-to-sequence model with a stacked LSTM encoder-decoder architecture to translate numbers into their word equivalents. Built a custom synthetic dataset with a text preprocessing and tokenization pipeline. Trained with teacher forcing for 150 epochs, demonstrating how encoder-decoder models learn structured sequence translations without attention mechanisms.

Research & Publications

What’s Next?

Get In Touch

I'm currently seeking Summer 2026 ML Engineering internships where I can apply my skills in deep learning, NLP, and computer vision to build impactful AI systems. Whether you have an opportunity, a question, or just want to connect, feel free to reach out!

Say Hello